Joie Zhang

Princeton CS '26, MATS Scholar

Hi! I’m Joie Zhang (pronounced as “joey”). I’m a fourth-year CS undergraduate at Princeton, where I’m grateful to be co-advised by Prof. Danqi Chen and Prof. Peter Henderson. I am also a MATS Scholar mentored by Dr. Lewis Hammond.

My research focuses on alignment + safety and pragmatic long-context reasoning evals for LLM agents.

I’m particularly interested in investigating MARL for safety and safety for MARL: how can we use multi-agent RL to improve the adversarial robustness of language models? Conversely, how can we tackle new safety risks that emerge in multi-agent LLM systems? Recently, I’ve been thinking about pretraining safety, evaluation awareness, and how we can apply techniques from long-context language modeling to improve chain-of-thought faithfulness.

Additionally, I’m interested in rethinking long-context training, reasoning, and continual learning from the perspective of computer-use agents. What if we designed new training paradigms that more closely resembled multi-turn dialogues? Can language models balance parametric updates on new, truthful information while remaining robust to lies, satire, and prompt injections that may cause confusion and misalignment? Beyond relying on context compression and recursive prompting techniques, how can we make both pretraining and post-training better suited for long-context, multi-turn agent workflows?

Outside of research, I lead AI@Princeton, which is Princeton’s largest undergrad organization focused on increasing AI literacy on campus. I’m also the Treasurer of Princeton Women in Computer Science, a CS teaching assistant, and a member of the Edwards Collective. When I’m not queueing jobs or reading outputs, I like to run, swim, and pet strangers’ dogs.

Email: [x]@princeton.edu where [x]=joie

research highlights:

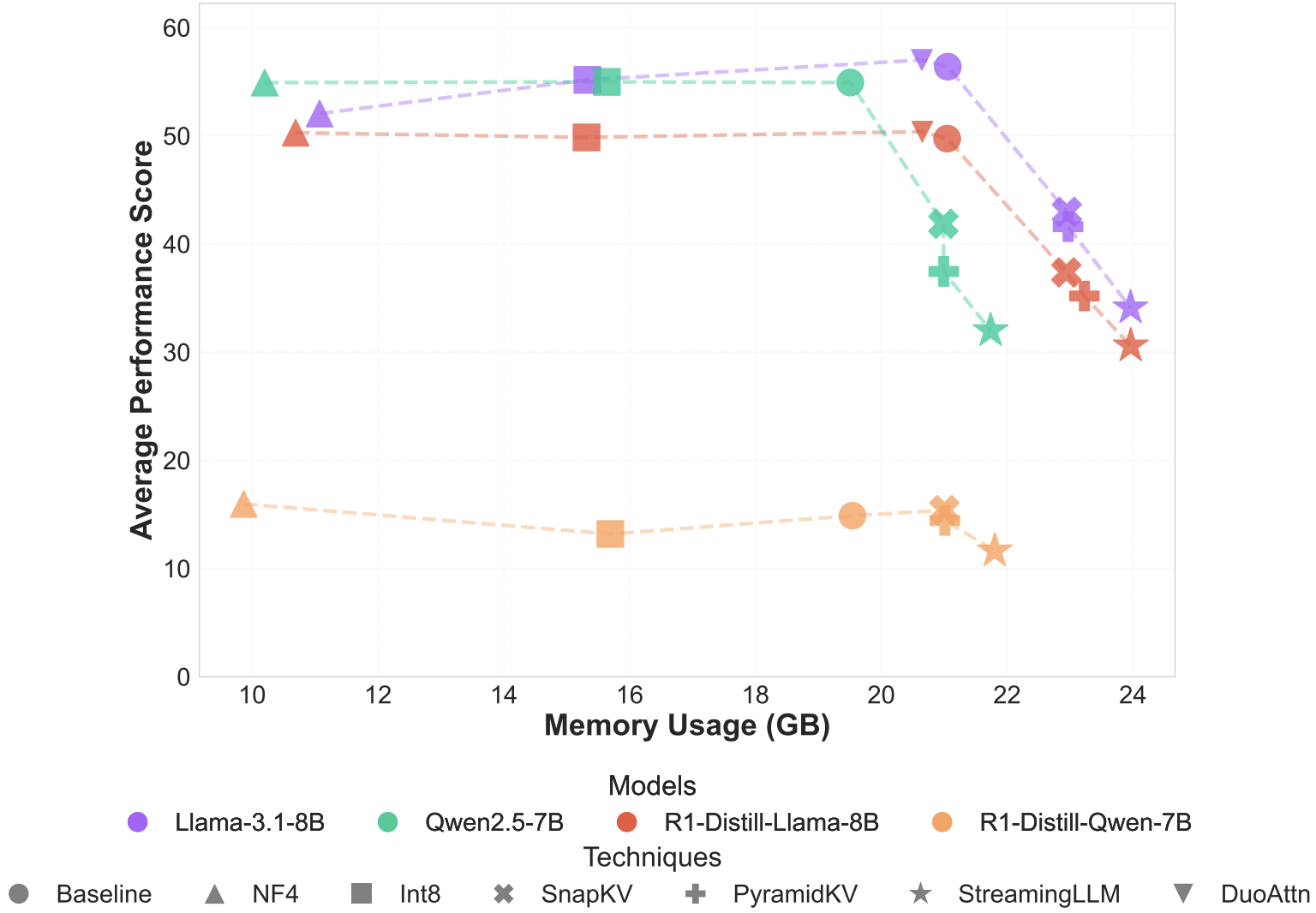

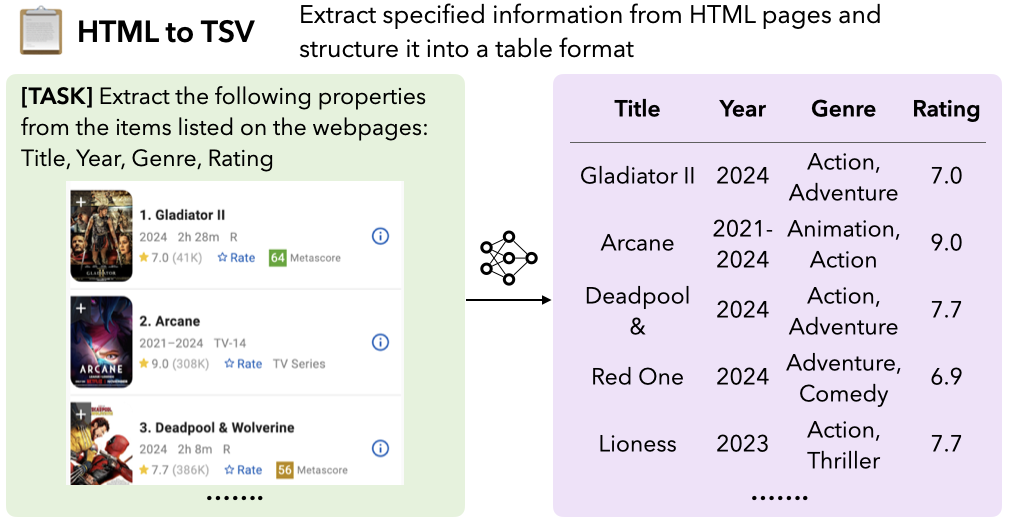

- Reasoning-Focused Long-Context Evals. LongProc (COLM, 2025), Reasoning-Focused Evaluation of Efficient Long-Context Inference Techniques (NeurIPS Efficient Reasoning Workshop, 2025)

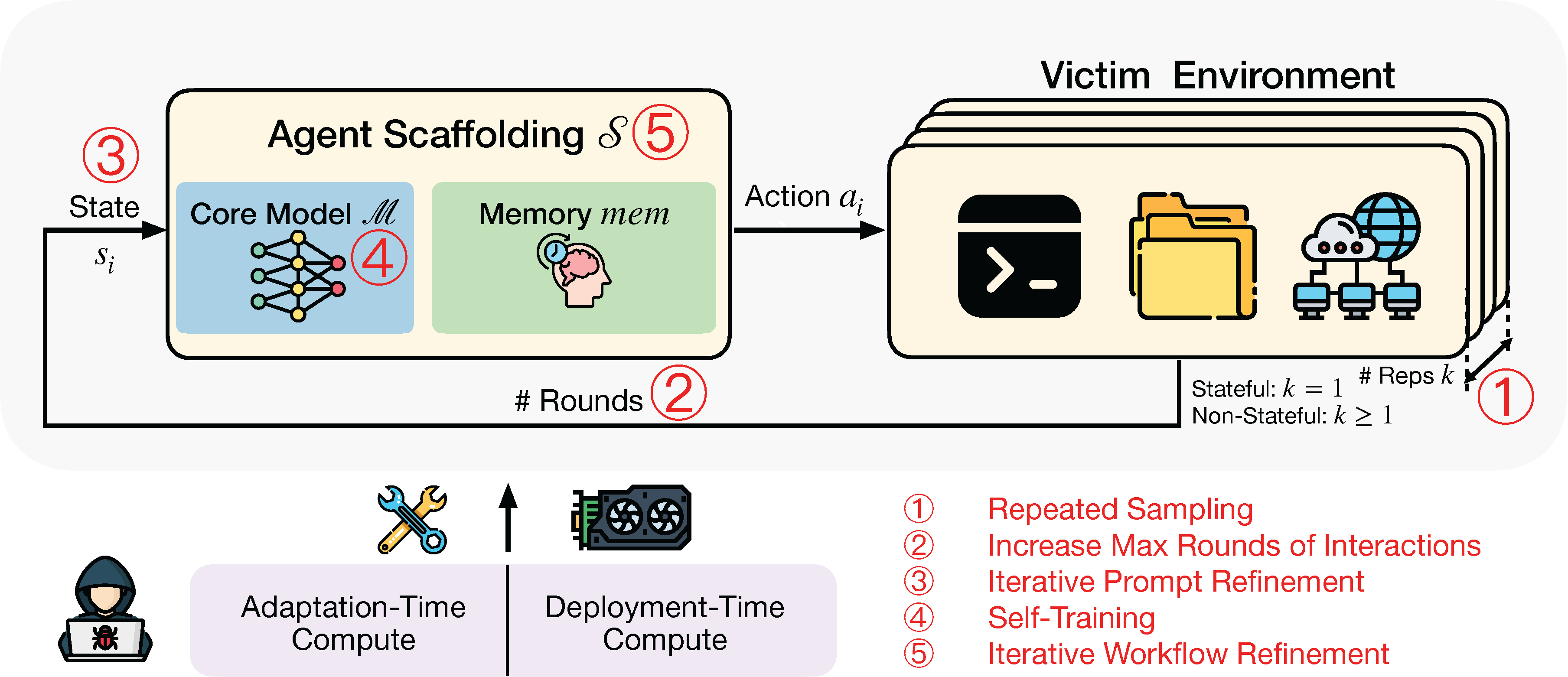

- Agent Safety Evals. Dynamic Risk Assessments for Offensive Cybersecurity Agents (NeurIPS, 2025)

- Multi-Agent Systems. Scaling Laws for Strategic Interactions (2025), Propagating In-Context Scheming in Multi-Agent Systems (2025)

latest posts

| Dec 25, 2025 | Highlights of NeurIPS 2025 |
|---|---|

selected publications

news

| Dec 07, 2025 | Attended NeurIPS 2025 in San Diego and presented two papers: Dynamic Risk Assessments for Offensive Cybersecurity Agents in the main conference, and Reasoning-Focused Evaluation of Efficient Long-Context Inference Techniques at the Efficient Reasoning workshop. |
|---|---|
| Jul 19, 2025 | Attended ICML 2025 in Vancouver and presented our paper, “Dynamic Risk Assessments for Offensive Cybersecurity Agents”, at two workshops: Computer-Use Agents, and Reliable and Responsible Foundation Models. |
| Jun 16, 2025 | Started as a researcher at MATS under the wonderful Lewis Hammond. |
| Mar 15, 2025 | Organized and went on AI TigerTrek. We visited and held Q&As at OpenAI, Google, Anthropic, Center for AI Safety, and more. |
| May 28, 2024 | Started a SWE internship at Meta working on integrity infrastructure. |
| Aug 10, 2023 | Released ShieldQL, an Express middleware library providing GraphQL query security via user authentication, authorization, and query sanitization. |